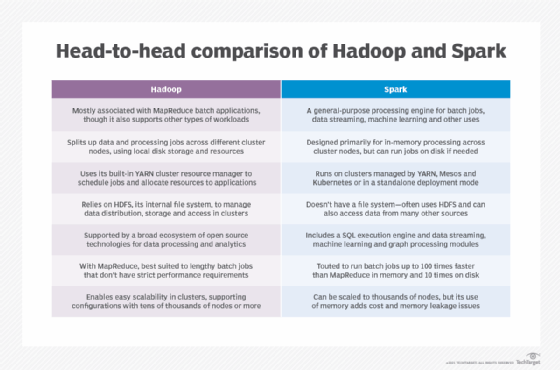

Most data users know only SQL and are not comfortable with programming. Shark is a tool developed for people from a database background, giving them access to Scala MLlib capabilities through a Hive-like SQL interface. Shark helps data users run Hive on Spark, offering compatibility with the Hive metastore, queries and data. (Shark has since been superseded by Spark SQL.)

Question 2: List some use cases where Spark outperforms Hadoop in processing.

- Sensor data processing: Apache Spark's in-memory computing works best here, as data is retrieved and combined from different sources.
- Real-time querying: Spark is preferred over Hadoop for real-time querying of data.
- Stream processing: for processing logs and detecting fraud in live streams to raise alerts, Apache Spark is a strong fit.

Question 3: Compare Spark vs Hadoop MapReduce

Hadoop MapReduce does not leverage the memory of the Hadoop cluster to the maximum. Spark, by contrast, lets you keep data in memory through RDDs, caches data in memory to ensure low latency, and supports real-time processing through Spark Streaming.

Simplicity, flexibility and performance are the major advantages of using Spark over Hadoop:

- Spark can be up to 100 times faster than Hadoop MapReduce for big data processing because it stores data in memory, in Resilient Distributed Datasets (RDDs).
- Spark is easier to program, as it comes with an interactive mode.
- It provides complete recovery using the lineage graph whenever something goes wrong.

A sparse vector has two parallel arrays: one for indices and the other for values.
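To make the "two parallel arrays" layout of a sparse vector concrete, here is a minimal sketch in plain Python. The class name `SimpleSparseVector` and its methods are hypothetical and exist only for illustration; this is not Spark's own implementation. In Spark itself the equivalent vector is normally built with `Vectors.sparse(size, indices, values)` from MLlib.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SimpleSparseVector:
    """Hypothetical illustration class, not part of Spark."""
    size: int            # logical length of the vector
    indices: List[int]   # positions of the non-zero entries, strictly increasing
    values: List[float]  # values[k] is the entry stored at position indices[k]

    def __post_init__(self) -> None:
        # The two arrays are parallel, so they must line up one-to-one.
        if len(self.indices) != len(self.values):
            raise ValueError("indices and values must have the same length")
        if any(i < 0 or i >= self.size for i in self.indices):
            raise ValueError("index out of range")
        if any(a >= b for a, b in zip(self.indices, self.indices[1:])):
            raise ValueError("indices must be strictly increasing")

    def to_dense(self) -> List[float]:
        # Expand to a full list, filling unstored positions with 0.0.
        dense = [0.0] * self.size
        for i, v in zip(self.indices, self.values):
            dense[i] = v
        return dense

    def dot(self, other: "SimpleSparseVector") -> float:
        # Walk both index arrays in step; only shared indices contribute.
        if self.size != other.size:
            raise ValueError("vectors must have the same size")
        total, a, b = 0.0, 0, 0
        while a < len(self.indices) and b < len(other.indices):
            ia, ib = self.indices[a], other.indices[b]
            if ia == ib:
                total += self.values[a] * other.values[b]
                a += 1
                b += 1
            elif ia < ib:
                a += 1
            else:
                b += 1
        return total
```

For example, `SimpleSparseVector(5, [1, 3], [2.0, 4.0])` stands for the dense vector `[0.0, 2.0, 0.0, 4.0, 0.0]` while storing only two entries, which is where the memory saving comes from when most entries are zero.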

As a Big Data professional, you need to understand the terminology and technologies of the field, including Apache Spark, one of the most widely used technologies in the Big Data sector. What should you expect from a Spark interview? Would you like to make a difference in your career? Go through these Apache Spark interview questions to prepare for job interviews and get started in your big data career.

Apache Spark interview questions are primarily technical and aim to find out what you know about its functions and processes. Much of the interview will likely consist of Spark questions and answers, but you should also be able to answer general interview questions. Spark questions can call for demonstrable knowledge of the system, so consider reviewing Spark programming and preparing examples of tasks you have completed successfully. Consider whether it makes sense to draw on your experience when answering: some questions only need a short, direct answer, while others call for more detail about what you have done. The questions collected here are among the most commonly asked in Apache Spark interviews.

100 Most Repeated Apache Spark Interview Questions & Answers

Do you want to land a job with your Apache Spark skills? How ambitious! Are you ready? First, you will need to get through the interview. We give you the top 100 Apache Spark interview questions and answers that you should prepare in 2021.

Big data, research and emerging technology, data-driven decision-making and the analysis of results have all made considerable progress. Worldwide revenues for big data and business analytics (BDA) will grow from $130.1 billion in 2016 to more than $203 billion in 2020 (source: IDC). Prepare with these top Apache Spark interview questions to get ahead in the growing big data market, where global and local companies, large and small, are looking for skilled Big Data and Hadoop experts.
